The Context Challenge in Healthcare AI

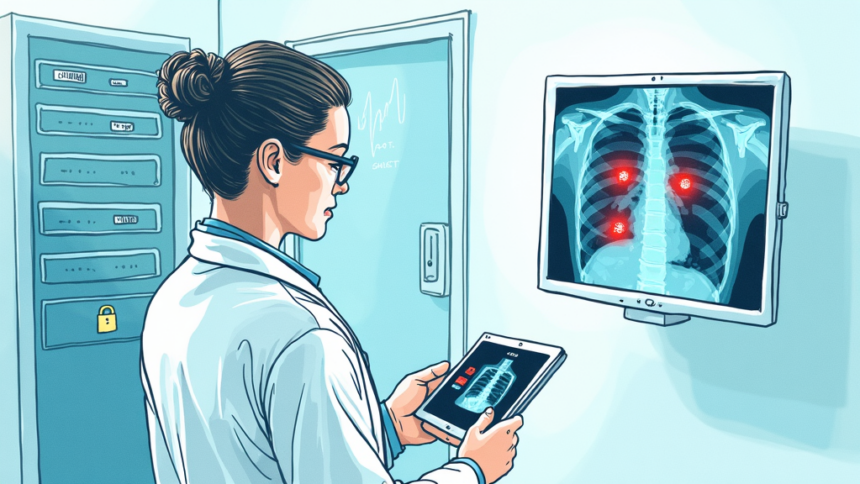

Artificial intelligence systems in healthcare are not inherently secure. Their safety depends heavily on the context in which they operate and the controls placed around them. A model trained on general medical data may fail or produce harmful outputs when applied to a different patient population or clinical workflow. For example, an AI tool for radiology image analysis may perform well on adult scans but produce unreliable results for pediatric cases if it was never validated on that population. This matters especially in healthcare, where a misdiagnosis or delayed alert can directly affect patient safety. The core principle is that AI security is not just about protecting the model but also about ensuring it behaves correctly in each specific use case.
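The adult-versus-pediatric example can be made concrete with a guard that checks each case against the population a model was validated on before its output is trusted. The following is a minimal sketch under stated assumptions: `ValidatedContext`, `in_validated_context`, and the age range and modality codes are hypothetical names and values chosen for illustration, not taken from the source article or any real product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ValidatedContext:
    """Population a model was validated on (hypothetical values)."""
    min_age_years: int
    max_age_years: int
    modalities: frozenset


def in_validated_context(ctx: ValidatedContext, age_years: int, modality: str) -> bool:
    """Return True only if the case falls inside the validated population."""
    return (
        ctx.min_age_years <= age_years <= ctx.max_age_years
        and modality in ctx.modalities
    )


# Assumed example: a radiology model validated only on adult chest X-ray and CT.
ADULT_RADIOLOGY = ValidatedContext(18, 90, frozenset({"CXR", "CT"}))

print(in_validated_context(ADULT_RADIOLOGY, 45, "CXR"))  # True: adult case
print(in_validated_context(ADULT_RADIOLOGY, 7, "CXR"))   # False: pediatric case
```

A case that fails the check would typically be routed to a clinician without an AI result rather than silently scored.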

Implications for Hospital Security and Compliance

For hospitals and health systems, this means security teams must go beyond traditional vulnerability management. They need to implement strict access controls, continuous monitoring of AI outputs, and validation protocols tailored to each clinical deployment. Consider a clinical decision support tool that integrates with the EHR: if an attacker manipulates its input data, the tool could produce an incorrect drug dosage recommendation, and the tampering would violate HIPAA’s integrity requirements for ePHI. Healthcare CISOs should establish a governance framework that includes regular audits of model performance, data lineage checks, and rapid rollback procedures. This approach not only protects patient data but also maintains trust in AI-driven care, a key concern for healthcare compliance officers navigating FDA guidance on software as a medical device.
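One common way to detect manipulated input data before it reaches a decision support model is to attach a keyed integrity tag to each record at the source and verify it at the point of use. The sketch below uses Python's standard `hmac` and `hashlib` modules; the function names, the record fields, and the demo key are hypothetical, and it is only an illustration of the idea, not a description of how any particular EHR or the source article handles integrity.

```python
import hashlib
import hmac
import json


def sign_record(record: dict, key: bytes) -> str:
    """Compute an HMAC-SHA256 tag over a canonical JSON encoding of the record."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def is_untampered(record: dict, tag: str, key: bytes) -> bool:
    """Recompute the tag and compare it in constant time."""
    return hmac.compare_digest(sign_record(record, key), tag)


# Hypothetical key and record for illustration only; a real key would be
# issued and stored by a secrets manager, never hard-coded.
KEY = b"demo-key-not-for-production"
record = {"patient_id": "example-001", "weight_kg": 70.0}
tag = sign_record(record, KEY)

tampered = dict(record, weight_kg=170.0)  # simulated manipulation in transit

print(is_untampered(record, tag, KEY))    # True
print(is_untampered(tampered, tag, KEY))  # False
```

A failed check would stop the record from being scored and raise an alert, which also gives auditors a trail for the data lineage checks mentioned above.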

What This Means for Healthcare Organizations

Ultimately, AI security in healthcare hinges on maintaining both context and control. Context ensures the AI is matched to the specific clinical environment, patient demographics, and regulatory boundaries. Control ensures that humans remain in the loop to override or correct AI decisions, especially in high-risk areas like ICU monitoring or surgical robotics. A practical step is to deploy AI models in isolated environments first, validate their outputs against human decisions, and only then integrate them into workflows. By prioritizing context-aware security, hospitals can harness AI’s potential without compromising patient safety or running afoul of the HIPAA Security Rule.
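The "validate against human decisions" step can be sketched as a shadow-mode comparison, in which the model runs alongside clinicians without influencing care and its outputs are scored for agreement. This is a minimal illustration under assumptions: `agreement_rate`, `ready_for_integration`, the label strings, and the 0.95 threshold are hypothetical placeholders, and a real acceptance criterion would be set by clinical governance rather than by a single number.

```python
def agreement_rate(ai_outputs, clinician_decisions):
    """Fraction of cases where the model's output matched the clinician's decision."""
    if len(ai_outputs) != len(clinician_decisions):
        raise ValueError("both lists must cover the same cases")
    if not ai_outputs:
        raise ValueError("at least one case is required")
    matches = sum(a == c for a, c in zip(ai_outputs, clinician_decisions))
    return matches / len(ai_outputs)


def ready_for_integration(ai_outputs, clinician_decisions, threshold=0.95):
    """Gate integration on an assumed minimum agreement threshold."""
    return agreement_rate(ai_outputs, clinician_decisions) >= threshold


# Hypothetical shadow-mode log: the model disagrees with the clinician on case 3.
ai = ["alert", "no_alert", "alert", "no_alert"]
clinician = ["alert", "no_alert", "no_alert", "no_alert"]

print(agreement_rate(ai, clinician))         # 0.75
print(ready_for_integration(ai, clinician))  # False
```

Raw agreement treats all disagreements equally; in high-risk settings, missed alerts and false alarms would usually be tracked separately.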

Source: https://www.healthcareinfosecurity.com/ai-security-hinges-on-context-control-a-31268